The Human Credential: Biometrics Use in the Security Industry

Published on June 4, 2021 by Jon Polly

The security industry is continually innovating to meet new and unexpected needs, and biometrics is one of the clearest examples. Biometrics have been around for over 30 years, ranging from fingerprint, palm, and retinal/iris scanning to face-scanning technologies. It wasn't until 2013, however, that biometrics received a push from an unlikely partner: Apple introduced Touch ID on the iPhone 5s (and later devices), allowing users to unlock their phones with a registered fingerprint. With the public using biometrics for simple yet secure tasks such as unlocking a phone, the security industry began ramping up biometrics as both a credential and an investigative tool.

The fact is that while phones, badges, fobs, keys, and other credentials are easily lost, forgotten, or simply not carried, biometrics are with the user at all times. Biometrics are also specific to a single user. No two people have the same biometric data; even identical twins are not totally identical. Scars on a face, minor fluctuations in voice, fingerprints, finger veins, and palm prints all differ. However, identical twins can still present a problem for many biometric systems, because those systems match on a probability score rather than an exact match: two people who are similar enough can both score above the system's match threshold. This must be addressed.
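To make the "probability scale" concrete, here is a minimal Python sketch of threshold-based 1:1 verification. It assumes each biometric capture has already been turned into a numeric feature vector (a "template") and compares templates with cosine similarity. The vectors, the similarity measure, and both threshold values are hypothetical illustrations, not any vendor's algorithm.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors: 1.0 means they point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def verify(enrolled, probe, threshold=0.90):
    """1:1 verification: accept if the probe scores at or above the threshold."""
    return cosine_similarity(enrolled, probe) >= threshold

# Hypothetical 4-feature templates; real systems use far longer vectors.
enrolled = [0.82, 0.10, 0.55, 0.31]   # user's stored template
same_user = [0.80, 0.12, 0.57, 0.30]  # same user, new capture
twin = [0.79, 0.15, 0.52, 0.35]       # different person, very similar features
stranger = [0.10, 0.90, 0.20, 0.70]   # unrelated person

for label, probe in [("same user", same_user), ("twin", twin), ("stranger", stranger)]:
    score = cosine_similarity(enrolled, probe)
    print(f"{label}: score={score:.4f} "
          f"lenient={verify(enrolled, probe)} "
          f"strict={verify(enrolled, probe, threshold=0.999)}")
```

With the lenient threshold, the hypothetical twin is accepted along with the genuine user. Raising the threshold rejects the twin but leaves much less margin for a genuine user on a poor capture. That trade-off between false accepts and false rejects is why twins have to be addressed explicitly rather than assumed away.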

History of Biometrics

The first biometrics were considered clunky. Data transmission was slow and processing biometrics demanded heavy computing power, so the first biometric devices were large boxes on the wall for fingerprint or iris reads. Massive servers were needed to run even a single camera for face matching, let alone facial recognition or facial authentication (using the face as a credential). The cost of implementing biometrics was also outside the budget of most companies. Finally, early biometrics could be circumvented with minimal effort: a person's picture, a fingerprint on a glove, or even a recording of a voice could defeat them.

Biometrics Today

Today's biometrics are much better than those of the past. Data transmission is faster, and computing power now allows many biometrics to run almost instantaneously at the network's edge. Server-based applications can process many biometrics from many sensors at the same time. Many biometrics now have a "liveness" feature built in to prevent spoofing. For example, facial authentication now uses cameras with depth perception along with artificial intelligence (AI) neural networks trained to improve accuracy. Iris scanners can determine whether an eye is no longer attached to a body. Voice biometrics analyze not only the voice itself but also its frequency characteristics, and they prompt the speaker with a randomized phrase of a specific length to confirm that a live person, rather than a recording, is speaking.
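The randomized-phrase idea behind voice liveness can be sketched as a simple challenge-response check. This minimal Python example assumes a speech-to-text step (not shown) has already produced a transcript of what the user said. The word list, the `make_challenge` and `check_response` names, and the 10-second window are hypothetical. Speaker verification itself, matching the voice to the enrolled user, would be a separate step.

```python
import secrets
import time

# Hypothetical vocabulary; a real deployment would use a much larger one.
WORDS = ["amber", "river", "seven", "falcon", "maple",
         "copper", "north", "lantern", "orbit", "cedar"]

def make_challenge(length=4):
    """Pick `length` words with a cryptographically secure random generator."""
    return " ".join(secrets.choice(WORDS) for _ in range(length))

def check_response(challenge, transcript, issued_at, answered_at, max_seconds=10.0):
    """Liveness check: spoken words must match the challenge and arrive in time."""
    in_time = 0 <= answered_at - issued_at <= max_seconds
    same_words = transcript.lower().split() == challenge.split()
    return in_time and same_words

issued_at = time.monotonic()
challenge = make_challenge()
print("Please say:", challenge)
# Simulated transcript; in practice it would come from a speech-to-text engine.
transcript = challenge.upper()
print(check_response(challenge, transcript, issued_at, time.monotonic()))
```

Because the phrase is chosen at request time, a recording made earlier is unlikely to contain the right words, and the time window limits how long an attacker has to produce them.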

The Legal Ramifications of Biometrics

The evening news seems frenzied over biometrics these days. Lawmakers are limiting or banning biometrics at the local and state levels and are even attempting to do so at the federal level. While most of the frenzy revolves around facial recognition, meaning the real-time, one-to-many (1:N) matching of a face against a database, the debate has trickled into conversations about banning facial, iris/retinal, gait (how someone walks), and voice biometrics. A recent article in Security Info Watch discusses this dilemma.
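The difference between 1:1 authentication and 1:N recognition is easiest to see in code. In this minimal Python sketch, a probe template is compared against every enrolled template in a gallery, and the best match at or above a threshold is returned. The gallery entries, badge IDs, cosine-similarity measure, and 0.95 threshold are hypothetical choices for illustration.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors: 1.0 means they point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def identify(probe, gallery, threshold=0.95):
    """1:N identification: return the best-matching ID at or above threshold, else None."""
    best_id, best_score = None, threshold
    for person_id, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score >= best_score:
            best_id, best_score = person_id, score
    return best_id

# Hypothetical enrolled templates keyed by badge ID.
gallery = {
    "badge_1001": [0.82, 0.10, 0.55, 0.31],
    "badge_1002": [0.10, 0.90, 0.20, 0.70],
    "badge_1003": [0.40, 0.40, 0.60, 0.50],
}

print(identify([0.80, 0.12, 0.57, 0.30], gallery))  # a face resembling badge_1001
print(identify([0.0, 0.0, 0.0, 1.0], gallery))      # a face no one enrolled
```

A 1:N search makes one comparison per enrolled person, so the chance that some stranger clears the threshold generally grows with the size of the gallery. That is one reason recognition draws more scrutiny than a 1:1 phone unlock.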

The legal issues center on two questions.

- One, can a person opt in to or opt out of a biometric? The issue of opting in was brought to the public's attention when it was reported that Taylor Swift used selfie kiosks equipped with facial recognition to track known stalkers at a concert. In retail stores that use biometrics to create a better customer experience, by contrast, a person can simply choose not to enter the store, or "opt out."

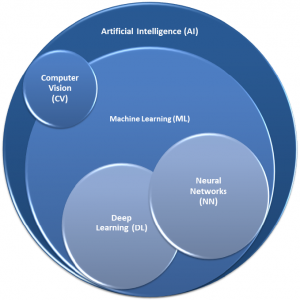

- Two, how should biometrics be trained? AI is commonly described as a combination of computer vision (CV), machine learning (ML), neural networks (NN), and deep learning (DL). AI is best understood as a continuum of interwoven circles, similar to a Russian nesting doll. However, the continuum has some quirks. In the accompanying illustration, computer vision overlaps both AI and ML. Inside the ML circle sit both the NN and DL circles, because these models rely on each other to function. Deep learning is the culmination of all of these technologies combined.

As with any learned behavior, AI ensures that bad data going in unchecked will bring bad data out. The problem is that few standards exist for how biometric systems should be trained, and there are no benchmark requirements. Companies are allowed to self-certify their results, and with more and more companies offering biometrics, these results are convoluted at best, while the datasets used for training are being called into question. The argument focuses on biometric bias: if the dataset used to train the system is not free of bias, the system tends to reproduce that bias when it identifies people. Again, bad data going in unchecked will bring bad data out.
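One common way to look for this kind of bias is to measure error rates separately for each demographic group on a labeled test set. The minimal Python sketch below computes a false match rate (the share of impostor comparisons the system wrongly accepted) per group. The group labels and trial records are made-up placeholders, not real benchmark data.

```python
from collections import defaultdict

def false_match_rate_by_group(trials):
    """Return {group: false_match_rate} from (group, same_person, system_matched) records.

    Only impostor trials (same_person is False) count toward the false match rate.
    """
    impostor_trials = defaultdict(int)
    false_matches = defaultdict(int)
    for group, same_person, system_matched in trials:
        if not same_person:
            impostor_trials[group] += 1
            if system_matched:
                false_matches[group] += 1
    return {g: false_matches[g] / n for g, n in impostor_trials.items()}

# Hypothetical records: (group, same person?, did the system report a match?)
trials = [
    ("group_a", False, False), ("group_a", False, False),
    ("group_a", False, False), ("group_a", False, False),
    ("group_a", True, True),
    ("group_b", False, True), ("group_b", False, False),
    ("group_b", False, True), ("group_b", False, False),
    ("group_b", True, True),
]

print(false_match_rate_by_group(trials))  # {'group_a': 0.0, 'group_b': 0.5}
```

A large gap between groups, as in this toy data, is the kind of signal that should prompt questions about the training dataset before a system is deployed.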

While most limitations on biometrics apply to public places, in June 2021 Baltimore, MD, passed Council Bill 21-0001, creating a ban on private use of facial biometrics that extends to baby monitors, ADA assistance devices, and even facial recognition to start a car (sorry, Tesla). It stopped just short of blocking facial recognition to unlock a smartphone.

Conclusion

The biometrics industry, as it relates to security, is growing. Major manufacturers like Intel, Apple, NEC, Qualcomm, and others are providing innovative technology that will bring more biometrics into businesses. The ease of use coupled with the security of a biometric is driving much of the innovation. With innovation comes noise. A few years ago, there were ten biometric companies. Today, there are over 100, with more entering the marketplace each day. Biometrics could be the next shiny widget, one that requires due diligence on the part of the end user, consultant, or system integrator. Are biometrics the future? Maybe. Are they part of the future? Absolutely.